Worklog: Optimising GEMM on NVIDIA H100 for cuBLAS-like Performance (WIP)

🚧 Work in progress. Please reach out on LinkedIn if you spot any mistakes.

Introduction

Matrix multiplication sits at the core of modern deep learning. Whether it is transformers, CNNs, or even simple MLPs, everything eventually reduces to GEMM. GPUs are built to run this operation at scale, and libraries like cuBLAS set the performance bar with kernels tuned down to the last instruction.

In this blog I am rebuilding that path from the ground up on the NVIDIA H100. I start with the most basic kernels and gradually layer on optimisations: tiling into shared memory, register blocking, vectorisation, warp tiling, and then Hopper-specific features such as tensor cores, the Tensor Memory Accelerator, and more. This project is inspired by the great work of Pranjal Shankhdhar and Simon Boehm, and I try to build on from there with my own contributions, exploring the full optimisation path while providing a consistent and reproducible repository for replicating results. In the first seven kernels, we will use FP32 precision exclusively. In this phase, I wanted to focus on the fundamental optimisation techniques that form the bedrock of GEMM performance tuning and are largely architecture-independent. Using FP32 simplifies debugging with Nsight Compute and keeps PTX and SASS inspection clean. Once we enter the second phase, leveraging Tensor Cores and H100 specific features, we will transition to mixed precision. At that point, all benchmarks will compare against cuBLAS with Tensor Cores enabled, whereas in this first phase we compare against cuBLAS running in pure FP32 mode (Though they are also implemented in mixed precision in repo).

The goal is not just raw speed. It is to see what each change actually buys us, what the profiler tells us at every step, and how a kernel evolves from naive to highly tuned. By the end we will measure how close hand-rolled CUDA can get to cuBLAS, and whether on fixed matrix sizes we can even edge past it.

The full code is on my GitHub. All code is available on my GitHub with support for FP32 and BF16+FP32 mixed precision.

Let’s get started.

H100 Architecture

Before we get into the code, it helps to ground ourselves with a clear mental model of the GPU’s internal hardware components. Understanding the memory hierarchy within the GPU, the distinction between on-chip and off-chip memory in terms of size and latency, and the new components introduced with the Hopper architecture family will make everything that follows much easier to reason about. I won’t discuss the CUDA programming model in this section just yet; instead, I’ll introduce concepts incrementally as we move through the kernels, since I like to think of this more as a worklog. This section, then, serves more as a primer. The figure below adapted from Aleksa's post with an extension, shows the full architecture in detail.

At the top-level, the H100 is organised into multiple Graphics Processing Clusters (GPCs), we have 8 GPCs in total, and each GPC contains 18 Streaming Multiprocessors (SMs). Each four GPCs connect directly to one L2 partition and the other to second partition. These SMs hold the main compute units present on the chip along with some "on-chip" memory components. The SXM of the H100 has 132 SMs (the one I use here), while the PCIe has 114. Should actually be more than 132 SMs since 8 * 18 = 144; however, that 144 corresponds to the full GH100 die. In practice, some SMs are fused off, leaving 132 functional SMs in the SXM variant Modern GPUs such as the H100 are enormous and extremely complex pieces of silicon, making it nearly impossible to manufacture them without defects. Even a single faulty SM would make the entire chip unusable. To avoid this waste, NVIDIA fuses off defective or partially defective SMs, allowing the chip to function normally with fewer SMs. This process improves manufacturing yield. . Here is a more detailed view inside an SM:

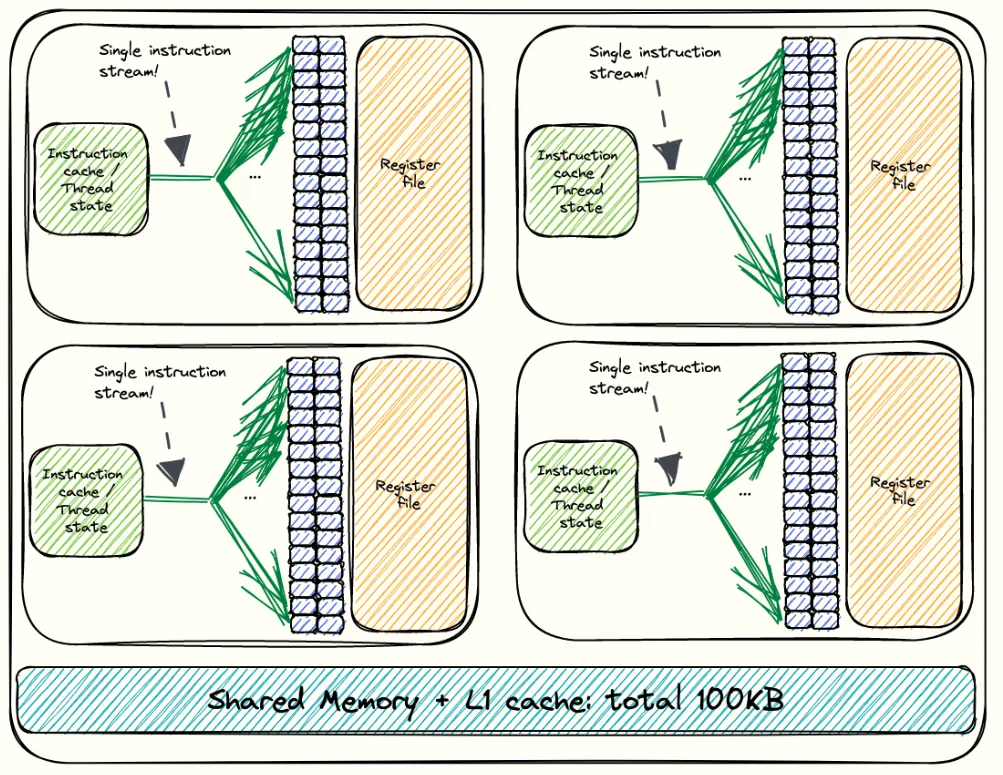

Inside the SM, there are four partitions as shown in the illustration above. Each SM contains the following key resources:

CUDA cores: These handle the standard floating-point operations (FLOPS) and Integer operations (IOPS).

- 128 FP32 (full-precision) CUDA cores, divided logically across the four partitions (32 / partition).

- 64 INT32 cores dedicated to integer and control operations (16 / partition).

- 64 FP64 (double-precision) cores used for high-accuracy compute (16 / partition).

Fourth-generation Tensor Cores: Each SM includes 4 of these specialised units. They are designed for high-throughput matrix-multiply-accumulate operations and are essential for achieving the peak performance of modern GPU workloads.

Load/Store (LD/ST) Units: These are responsible for moving data between the SM and the memory hierarchy.

SFU Units: Handles complex math operations such as

sin,cos,sqrt, andexp, offloading this work from the CUDA cores. Each SM partition has its own SFU, enabling these operations to run in parallel with regular arithmetic. If we encounter SASS instructions that begin withMUFU(e.g.,MUFU.SQRT,MUFU.EX2), these are executed by the SFUs.Dispatch Units: These act as the bridge between the warp schedulers and the execution pipelines. Once a warp scheduler selects a warp and its next instruction, the dispatch unit routes that instruction to the appropriate functional unit within the SM. Each SM partition has its own dispatch unit, allowing multiple instructions from different warps to be issued simultaneously to different execution units.

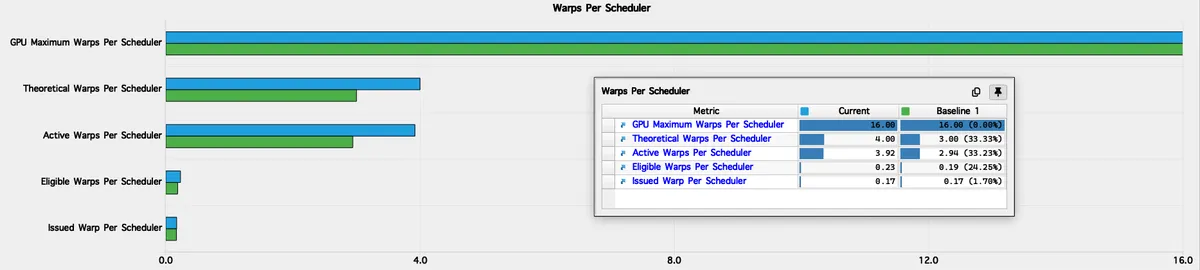

Warp Schedulers: Each SM includes four warp schedulers (one per partition), each responsible for issuing instructions to warps — groups of 32 threads (more on that later!). A warp scheduler can issue only one instruction per clock cycle to a single warp. Across the four partitions, an SM can therefore issue up to four warp instructions per cycle, meaning 128 threads can execute in parallel at any given moment. To keep all schedulers fully utilised, we want enough active warps per block so that none sit idle. This is why we generally avoid launching fewer than 128 threads per block, as it ensures that every scheduler has a warp to work with. In practice, an SM can host multiple thread blocks and can pull warps from another block if needed, but it’s still a good heuristic to keep this in mind in cases where the SM happens to have resources for only a single block.

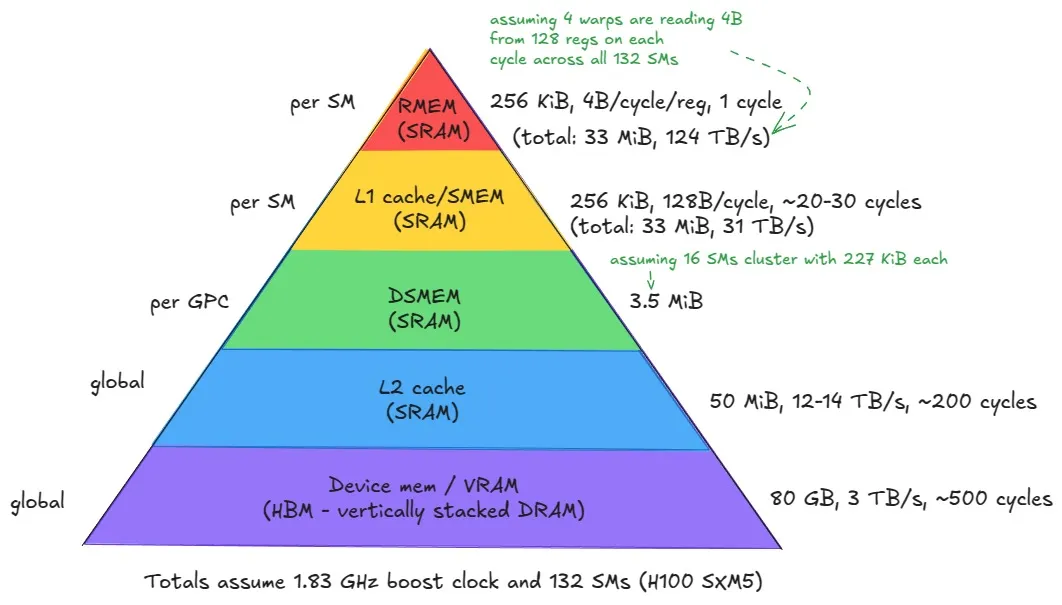

Now let’s look at the memory hierarchy, where each memory type physically resides within the GPU and how they differ in terms of access latency. Once again I will adapt the pyramid figure from Aleksa's post.

Let’s start from the bottom of the hierarchy and work our way upward, moving from the largest and slowest memories to the smallest and fastest ones.

- Global Memory (GMEM) / Device Memory (VRAM): This is the large off-chip memory sitting on the GPU package, made up of stacked HBM3 DRAM. It is generally not located on the same die as the SMs, though in modern data centre GPUs like the H100, it resides on a shared interposer alongside the GPU die to reduce latency and increase bandwidth. It uses Dynamic RAM (DRAM) cells, which are slower but denser than the Static RAM (SRAM) used in caches and registers. This memory offers the highest capacity - for instance, the H100 provides 80 GiB (≈ 687 billion bits), but also the highest latency, ≈ 500 clock cycles. Every SM accesses global memory through the L2 cache, and it serves as the backing store for all tensors/matrices. It is used to implement the GMEM of the CUDA programming model (Which we will talk about later) and to store register data that spills from the register file in the local memory.

L2 Cache: Above global memory sits the L2 cache, a large on-chip cache (made out of SRAM) shared across all SMs. It serves as the main bridge between the compute cores and the slower off-chip HBM, caching recently accessed data to reduce latency. It is physically partitioned into two parts; each SM connects directly to one partition and indirectly to the other through the crossbar.

Distributed Shared Memory (DSMEM): This is new in the memory hierarchy. DSMEM allows multiple thread blocks to share data directly across SMs within the same GPC. It extends traditional shared memory beyond a single SM, enabling cooperation between up to 16 blocks in a thread block cluster. It offers lower latency than L2 but higher than per-SM shared memory and L1 obviously.

Shared Memory (SMEM) & L1 Cache: I put these together since they co-exist on the same physical storage on-chip. They are again made out of SRAM cells, making them very fast with much lower latency and higher bandwidth than the other memory types below in the pyramid. They both have a maximum total size of 256 KiB with 31 TB/s of memory bandwidth. The L1 data cache is accessed by the LD/ST units of the SM. These 256 KiB can be configurable, by trading off a larger shared memory for a smaller L1 cache or vice versa. There is, however, a maximum of up to 228 KiB to assign for the shared memory, as we still need to have enough memory reserved for the L1 cache.Matter of fact, those 228 KiB are not exact, as seen from the H100 architecture figure above. 1 KiB of SMEM goes for system use per block anyway, so effectively, we have

228 − num_blocks * 1 KiBleft as our maximum configurable size.Register Memory (RMEM): Finally, at the lowest level of the memory hierarchy and at the top of our pyramid are the registers, which store the values manipulated by a single thread While registers are private to each thread, there is one exception: a thread can read another thread’s register, but only for threads within the same warp. This is done using warp level shuffle primitives. You can often find these in reduction kernels, for example, as they allow extremely fast communication between threads. . They are extremely fast, with effective bandwidths on the order of 124 TB/s and a latency of roughly one clock cycle. If a thread’s register usage exceeds the available register file, the compiler will spill values into local memory, which resides in global memory and is therefore much slower. As with CPU programming, registers are not directly manipulated at the CUDA C/C++ level. They are only visible in PTX and ultimately allocated by ptxas during compilation (see Compilation Story below). One of the compiler’s goals is to keep register usage per thread low enough to allow more thread blocks to reside simultaneously on an SM, since high register pressure reduces occupancy.

Tensor Memory Accelerator (TMA): Introduced with the Hopper architecture, it enables asynchronous data transfers between global memory and shared memory, as well as between shared memories within a thread block cluster. It also automatically performs swizzling to prevent shared memory bank conflicts, abstracting away the complex data movement and layout patterns that developers previously had to manage manually.

📖 Compilation Story

The journey of a CUDA program from source code to final execution is governed by a multi-stage compilation process orchestrated by the NVCC compiler driver. The NVCC compiler driver orchestrates the process, splitting the program into Host Code (CPU) and Device Code (GPU).

The Device Code is first compiled into PTX (Parallel Thread Execution) pronounced as "pee-tee-ecks" (or at least I do :)). PTX is NVIDIA's Virtual ISA (Instruction Set Architecture), which provides an architecture-independent, intermediate representation (IR) of the code. The ptxas assembler then takes the PTX code, performs necessary optimisations, and translates it into the Native ISA known as SASS (Streaming ASSembler). This is the lowest-level format in which human-readable code can be written. The SASS code, along with some other metadata are packaged into a CUBIN (CUDA Binary), which is the executable container for a specific GPU architecture. Finally, NVCC bundles one or more CUBINs together with the original PTX into a Fat Binary, which is then embedded inside the final executable alongside the CPU binary code.

The inclusion of PTX is crucial for forward compatibility: if the Fat Binary runs on a future GPU lacking a matching CUBIN, the runtime performs Just-In-Time (JIT) compilation using the embedded PTX to generate the necessary SASS, ensuring execution. We will analyse the PTX and SASS in kernels 2 and 5 to see why they are useful.

Now that we’ve built a solid mental model, let’s wrap up this section with a complete visualisation of the H100 architecture, bringing together everything we’ve discussed into perspective.

Kernel 1: Naive

In the CUDA programming model, computation is organised in a two level hierarchy. Each invocation of a CUDA kernel creates a new grid, which consists of multiple blocks. Inside each block there can be up to 1024 threads in total, regardless of whether the threads are arranged in 1D, 2D, or 3D. That is, the product blockDim.x * blockDim.y * blockDim.z <= 1024. All threads in a grid execute the same kernel function, and they rely on thread indices to distinguish themselves and to identify the appropriate portion of the data to process In general, it is recommended that the number of threads in each dimension of a thread block be a multiple of 32 for hardware efficiency reasons. They align with another concept called warps, which we will introduce soon. For now, just remember that a warp is a group of 32 threads, so keeping dimensions consistent with that is beneficial.. Kernels are written from the perspective of a single thread, following the SIMT (Single Instruction, Multiple Threads) execution model. Hence, CUDA programming is an instance of the SPMD (Single Program, Multiple Data) paradigm.

When a thread inside a kernel calls a __device__ function, it executes the function itself. It only knows about the thread that called it. Basically, it is just a normal function like in C++, but happening inside one GPU thread — and you can imagine thousands of these function instances running in parallel.

__global__ functions are the kernels. They are compiled for GPU execution but launched from the CPU (host). A kernel launch creates a grid of blocks, and each thread inside those blocks starts executing the kernel code independently.

All thread blocks operate on different parts of the input, so they can be executed in any arbitrary order. Therefore, we should never make assumptions about the execution order of blocks, or about which thread within a block will execute first.

Connecting our new understanding of the CUDA programming model with our mental model of the underlying hardware, the next diagram visualises the POV of a single thread, showing where it sits within the kernel and hardware, how it interacts with different memory spaces, and how it fits into the overall grid structure. I intentionally left out the L1 and L2 caches since they are hardware managed and not directly controlled by us.

In this first kernel, we will assign each thread in a block (within the grid) to compute exactly one element of C. Each thread takes its own coordinates and loops through the corresponding row of A across the shared dimension N (I know most official resources use K for this, but I’ve already committed to N across all kernels, so I’ll stick with that for consistency). At the same time, the thread loops down the matching column of B, accumulating the products as it goes. Once the loop is done, the result is written back into C at the same coordinates.

Spoiler alert: doing this 1-1 mapping of thread result to output is not actually the most efficient (if you guessed that, you’re right). In later kernels, we will have a single thread compute multiple elements of the output, but let’s leave this for now.

Below is a simple visualisation of how this works, including an example from the perspective of a single thread.

Notice that even though the CUDA programming model supports 2D coordinates (x, y), we still launch the block as 1D. In Simon’s work (Kernel 2), he mentions that switching from a 2D launch to a 1D launch plus remapping helps achieve coalesced global memory accesses. The idea is to launch a 1D block and then reinterpret threadIdx.x as a 2D coordinate using % and /.

However, when I tested both approaches, the 1D launch with remapping and a regular 2D launch, I observed identical performance. This initially puzzled me because Simon reported a speedup in his version. The key difference is that Simon’s naive kernel accessed matrix A down a column, which is a non-coalesced pattern, whereas his coalesced kernel accessed A across a row by letting blockIdx.x and threadIdx.x correspond to the row, not the column. This is the opposite of what I do in my version. In my implementation, even the naive kernel already accesses A in a coalesced, row-major fashion, so both versions naturally achieve the same efficiency. Funnily enough, this was probably very basic but it did confuse me for a while because I initially thought the coalescing came from the remapping trick.

So really Simon’s speedup comes from fixing the memory access pattern, not from the 1D versus 2D block layout itself. Since my naive kernel already uses coalesced loads, the launch configuration does not make a difference. I will still use the 1D launch plus remapping approach here because it gives me a natural moment to talk about memory coalescing, and I will also show how his version leads to a non-coalesced pattern. Let's just define what's a warp.

Each SM groups threads in a thread block into warps, which are sets of 32 threads. A warp is the unit of scheduling that the warp scheduler can issue instruction for only one warp at a time, and all 32 threads in that warp execute the same instruction in lockstep. Blocks are linearised into a 1D array (row-major order), then split into consecutive groups of 32 threads: warp 0 runs threads 0–31, warp 1 runs 32–63, and so on.

The code for this kernel looks like this:

template <const uint BLOCK_SIZE>

__global__ void sgemm_coalesced(const float* __restrict__ A, const float* __restrict__ B, float* __restrict__ C,

int M, int N, int K, float alpha, float beta) {

// flattened IDs remapping

uint row = blockIdx.y * BLOCK_SIZE + (threadIdx.x / BLOCK_SIZE);

uint column = blockIdx.x * BLOCK_SIZE + (threadIdx.x % BLOCK_SIZE);

if (row < M && column < K) {

float cumulative_sum = 0.0f;

for (int n = 0; n < N; n++) {

cumulative_sum += A[row * N + n] * B[n * K + column];

}

C[row * K + column] = (alpha * cumulative_sum) + (beta * (C[row * K + column]));

}

}

The remapping I mentioned happens here. If we had launched the block as two dimensional, we would simply use threadIdx.x and threadIdx.y directly, rather than computing them through threadIdx.x % BLOCK_SIZE and threadIdx.x / BLOCK_SIZE respectively.

6 = 7 - 1. Dividing by the number of seats per row gives the row index: 6 / 6 = 1, which corresponds to row 2 in 1 based indexing. The remainder tells me the seat within that row: 6 % 6 = 0, which becomes seat 1 in 1 based indexing. So seat 7 is the first seat of the second row. Dividing by the number of seats per row tells us how many complete rows we skip, and the remainder gives the seat position within that row.

uint row = blockIdx.y * BLOCK_SIZE + (threadIdx.x / BLOCK_SIZE);

uint column = blockIdx.x * BLOCK_SIZE + (threadIdx.x % BLOCK_SIZE);

Each warp of 32 threads executes the global memory load in parallel, like this:

cumulative_sum += A[row * N + n] * B[n * K + column];

Memory instructions (whether global or shared) may need to be re-issued depending on how addresses are distributed across the warp. Since we haven’t introduced shared memory yet (programming wise), let’s stay focused on global memory.

When a warp executes a load, the hardware checks if the 32 threads are accessing consecutive memory locations. The best case is when every thread reads consecutive addresses — the hardware can then coalesce all 32 requests into a single transaction.

Global memory sits in device DRAM, and DRAM is accessed in chunks of 32, 64, or 128 bytes. Fewer transactions mean higher efficiency. I’ll describe this in terms of FP32 loads (4 bytes per thread). If each thread’s 4-byte load required its own 32-byte transaction, throughput would drop by a factor of 8.

For example:

- If thread 0 reads location , thread 1 reads , thread 2 reads , … up to thread 31 reading , all 32 loads can be coalesced into a single memory transaction ().

- If the access pattern is irregular, multiple transactions may be needed, wasting bandwidth and reducing throughput.

Let us analyse the global memory access pattern for this coalesced kernel vs what a non-coalesced kernel would look like:

Running this kernel we get a throughput of 4.2 TFLOP/s, which comes out to about 8.2% relative to the FP32 cuBLAS kernel performance. We are still very far from what the hardware can theoretically deliver, often referred to as the Speed of Light (SoL)

Speed of Light refers to the theoretical upper bound on compute throughput that a GPU can deliver based purely on physics and chip design. For tensor core workloads this ceiling is given by: perf = freq_clk_max * num_tc * flop_per_tc_per_clk (989 TFLOP/s for Peak BF16 Tensor Core, 66.9 TFLOP/s for peak FP32 on H100 SXM5).

Although this number is usually presented as a fixed value, in practice it is not constant at all. It moves with the actual clock frequency the GPU can sustain, which itself changes under power and thermal limits. As a GPU approaches its power cap, the voltage regulator lowers the voltage, the clock speed drops, and the effective SoL falls with it. This behaviour is known as power throttling.

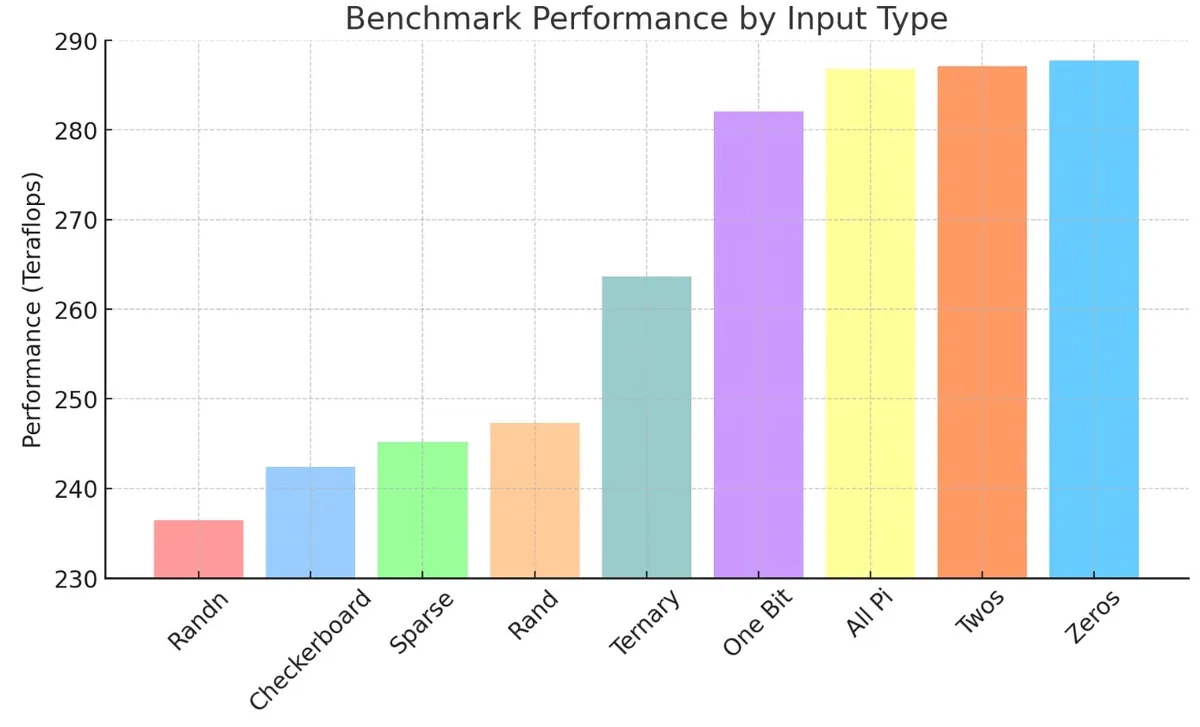

Horace He explored this beautifully using a simple matmul benchmark. A large matmul in PyTorch produced about 258 TFLOPs, while the same operation inside the CUTLASS profiler showed around 288 TFLOPs, a 10-11% improvement. It looked like a genuine kernel level speedup. But when the CUTLASS kernel was bound from Python and run with the same inputs, the gain disappeared. The only difference was that the CUTLASS profiler initialises tensors with integers, while PyTorch uses random numbers.

The reason this matters is rooted in how power is consumed on the chip. Static power keeps transistors on, while dynamic power is spent every time a transistor flips state. Random numbers cause chaotic bit flips across billions of transistors, which increases dynamic power and triggers throttling. Predictable values such as zeros or simple integer patterns flip far fewer bits, keep dynamic power low, and allow the GPU to hold higher clocks. In other words, kernels appear “faster” simply because the hardware is less electrically stressed, not because the code is more efficient.

This is why real kernels rarely reach the advertised peak TFLOP/s. The theoretical SoL assumes maximum clock frequency, but actual workloads constantly push into power and thermal constraints. The true ceiling shifts with voltage, clock speed, temperature, and even the randomness of your input data.

. This is expected because we are still early on, and one of the most obvious bottlenecks is the fact that every iteration forces us to reach out to global memory. As mentioned earlier, accessing GMEM costs roughly 500 cycles, while accessing shared memory (SMEM) costs around 20 to 30 cycles. In the next kernel, we will improve performance by letting threads cooperatively load values from GMEM into SMEM before performing any computation. Once the tile is in SMEM, threads can fetch their operands from there instead of repeatedly going to GMEM. This speeds things up considerably and helps us move closer to higher throughput.

. This is expected because we are still early on, and one of the most obvious bottlenecks is the fact that every iteration forces us to reach out to global memory. As mentioned earlier, accessing GMEM costs roughly 500 cycles, while accessing shared memory (SMEM) costs around 20 to 30 cycles. In the next kernel, we will improve performance by letting threads cooperatively load values from GMEM into SMEM before performing any computation. Once the tile is in SMEM, threads can fetch their operands from there instead of repeatedly going to GMEM. This speeds things up considerably and helps us move closer to higher throughput.

Kernel 2: Shared Memory Tiling

The logic behind this kernel will be as follows. We will allocate shared memory spaces for loading tiles from A and B, called sharedA and sharedB respectively. The number of elements in each tile will be TILE_SIZE * TILE_SIZE, which matches the number of threads in each block when we launch the grid with dim3 blockDim(32 * 32). This means that, just like before, each thread is responsible for computing a single element of the output. In addition to that, each thread will load two values per tile iteration, one from A and one from B, into shared memory.

I am pointing this out because later we will have kernels where each thread loads more than one element, which requires extra indexing logic to determine where that thread should write inside shared memory. In this kernel we do not need that yet, since each thread loads exactly one element from each matrix, so knowing ty and tx is enough.

This is easier to see visually than in words, so I start with a small example using 4 by 4 matrices A and B to illustrate the idea. After that I will show how the same logic looks inside the actual kernel.

Putting the logic together and applying it to our actual launch configuration, the kernel now looks like this.

The full code looks like this:

template <const uint TILE_SIZE>

__global__ void sgemm_tiled_shared(const float* __restrict__ A, const float* __restrict__ B, float* __restrict__ C,

int M, int N, int K, float alpha, float beta) {

// Allocate shared memory

__shared__ float sharedA[TILE_SIZE * TILE_SIZE];

__shared__ float sharedB[TILE_SIZE * TILE_SIZE];

// Identify the tile of C this thread block is responsible for (We assume tiles are same size as block)

const uint block_row = blockIdx.y;

const uint block_column = blockIdx.x;

// Calculate position of thread within tile (Remapping from 1-D to 2-D)

const uint ty = threadIdx.x / TILE_SIZE; // (0, TILE_SIZE-1)

const uint tx = threadIdx.x % TILE_SIZE; // (0, TILE_SIZE-1)

// Move pointers from A[0], B[0] and C[0] to the starting positions of the tile

A += block_row * TILE_SIZE * N; // Move pointer (block_row * TILE_SIZE) rows down

B += block_column * TILE_SIZE; // Move pointer (block_column * TILE_SIZE) columns to the right

C += (block_row * TILE_SIZE * K) + (block_column * TILE_SIZE); // Move pointer (block_row * TILE_SIZE * K) rows down then (block_column * TILE_SIZE) columns to the right

// Calculate how many tiles we have

const uint num_tiles = CEIL_DIV(N, TILE_SIZE);

float cumulative_sum = 0.0f;

// Iterate over tiles (Phase 1: Loading data)

for (int t = 0; t < num_tiles; t++) {

sharedA[ty * TILE_SIZE + tx] = A[ty * N + tx];

sharedB[ty * TILE_SIZE + tx] = B[ty * K + tx];

__syncthreads();

// Phase 2: Compute partial results iteratively

for (int i = 0; i < TILE_SIZE; i++) {

cumulative_sum += sharedA[ty * TILE_SIZE + i] * sharedB[i * TILE_SIZE + tx];

}

__syncthreads();

// Move all pointers to the starting positions of the next tile

A += TILE_SIZE; // Move right

B += TILE_SIZE * K; // Move down

}

// Write results back to C

C[ty * K + tx] = (alpha * cumulative_sum) + (beta * C[ty * K + tx]);

}

While this kernel achieves approximately a 1.7× throughput improvement over the previous kernel and now reaches 13.9% relative to cuBLAS (FP32), profiling it with Nvidia’s Nsight Compute reveals a few key problems.

First, if we look at the Speed of Light section in the profiler we notice something interesting. We get 76.63% Compute throughput and a Memory throughput of 91.13%. These are percentages relative to the SoL of the hardware on FP32. This sounds confusing, as at first 76.63% compute throughput may seem very decent, but this is a relatively basic GEMM kernel so it cannot make sense.

Looking at the throughput breakdown, which shows the bottlenecks in percentage terms, we instantly understand the misconception. SM: Inst Executed Pipe Lsu = 76.63% (the same number as shown in the overview because the overview simply displays the highest percentage across the instruction breakdown). Pipe LSU is the load store unit. As the profiler describes, its role is

"The LSU pipeline issues load, store, atomic, and reduction instructions to the L1TEX unit for global, local, and shared memory. It also issues special register reads (S2R), shuffles, and CTA level arrive or wait barrier instructions to the L1TEX unit."

The next highest issued instructions are SM: Mio Inst Issued at 40.08% which is the memory input or output unit. This means instruction issue is split almost half between LSU operations and non memory operations, again pointing to the memory side being the dominant force.

What about the number of instructions doing FP32 computation? SM: Pipe FMA Cycles Active = 14.81%. This is the real number we care about because it shows we are nowhere near utilising our FP32 hardware capability at all, which is exactly what we should expect this early on. This metric answers the question of on what fraction of active SM cycles the FP32 FMA execution pipe was actually doing work. For a good GEMM, we would love to see this number very high (think 60 to 80%+) because GEMM is almost all FMAs. Spoiler alert: we will see in kernel six how we increase this number to 62%.

The overview compute throughput number (the one selected as the maximum) was therefore a bit misleading because the overall value considers all instructions executed on the SM (ALU, FMA, SFU, LSU and so on). There are other useful metrics as well, but we will ignore them for now. I have all the profiling reports in downloadable .ncu-rep format in the GitHub repository if you want to open them in Nsight Compute and inspect them in detail. For now let us turn our attention to the memory throughput breakdown.

Our top three metrics are L1: Data Pipe Lsu Wavefronts = 91.13%, L1: Lsu Writeback Active = 87.07% and L1: Lsuin Requests = 76.63%. At this point it is obvious we are simply overwhelming shared memory with the sheer amount of loads and stores we request to and from it.

There are still interesting metrics and angles to look at this from the profiler so the below figure will show three different views from the profiler annotated. For the SASS code, you can view the full SASS code of this kernel either from the profiler itself in the source section or from this GoodBolt link.

As I point out in the roofline plot

Therefore, in our next kernel, instead of letting each thread compute the result of just one element in the output matrix, we will let it compute multiple elements. Each thread will partially accumulate several results in its own registers and only once the computation is complete will it store the final values back from registers into C.

Kernel 3: 1D Register Tiling

Write up in progress. All code for kernels are available on GitHub.

Kernel 4: 2D Register Tiling

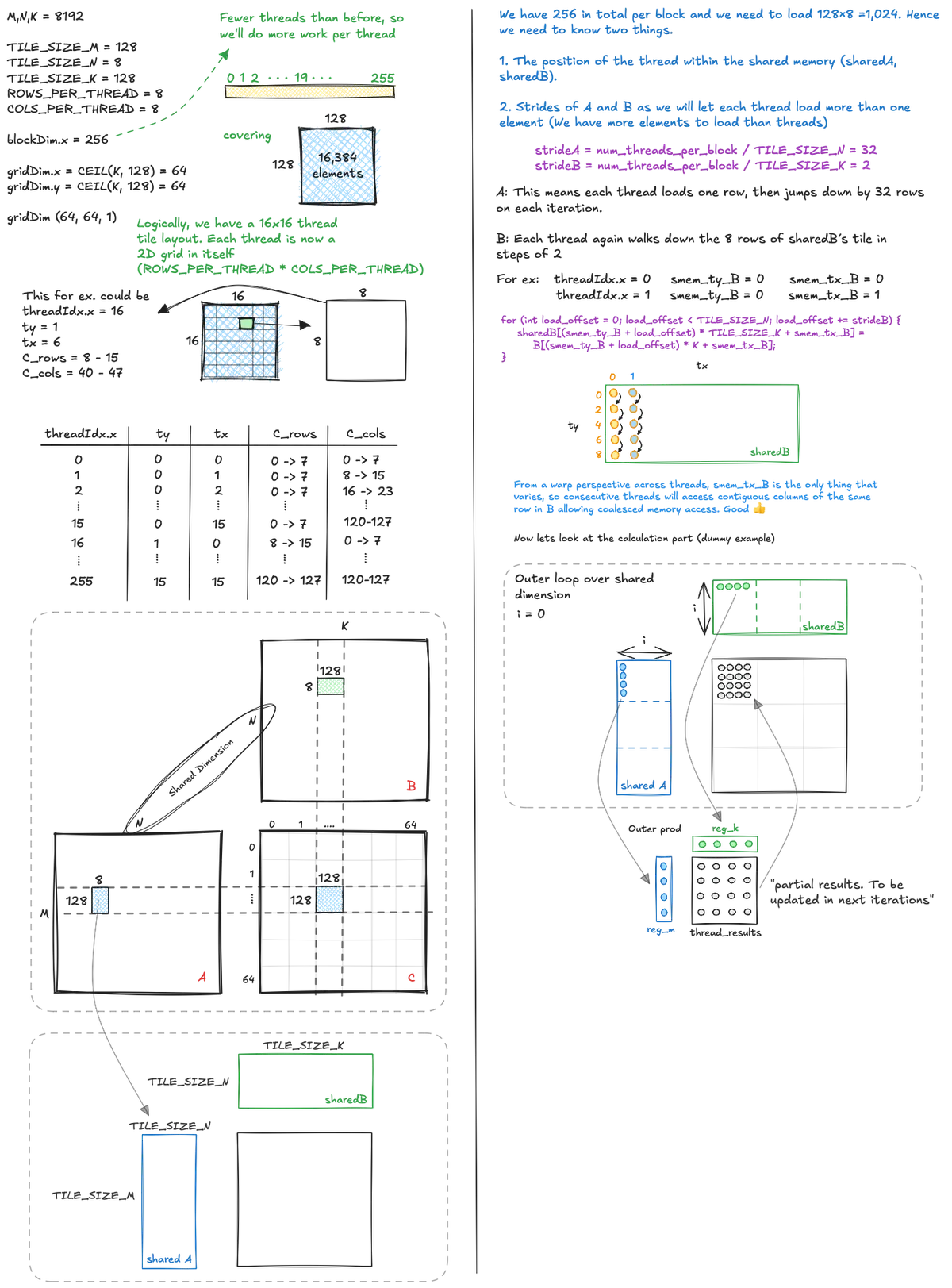

To squeeze in a little more arithmetic intensity in our kernel we now let each thread compute more than a single output element. As illustrated below, the idea is that a thread block covers a tile of the output matrix and inside that tile each thread is responsible for its own small 2D patch, computing ROWS_PER_THREAD * COLS_PER_THREAD results.

Since we now launch fewer threads than the number of elements in the shared memory tiles, each thread must load multiple elements from global memory into shared memory, using a stride so that all elements of the tiles are covered without overlap. Once the shared memory tiles for A and B are fully populated, each thread repeatedly loads the pieces of A and B it needs from shared memory into registers, performs partial outer product updates, and accumulates into its local registers until its final ROWS_PER_THREAD * COLS_PER_THREAD block of C is complete.

The code for this kernel looks like this:

template <const uint TILE_SIZE_M, const uint TILE_SIZE_N, const uint TILE_SIZE_K, const uint ROWS_PER_THREAD>

__global__ void sgemm_1D_registertiling(const float* __restrict__ A, const float* __restrict__ B, float* __restrict__ C,

int M, int N, int K, float alpha, float beta) {

// Allocate shared memory

__shared__ float sharedA[TILE_SIZE_M * TILE_SIZE_N];

__shared__ float sharedB[TILE_SIZE_N * TILE_SIZE_K];

// Identify the tile of C this thread block is responsible for

const uint block_row = blockIdx.y;

const uint block_column = blockIdx.x;

// Calculate position of thread within tile (Remapping from 1-D to 2-D)

const uint ty = threadIdx.x / TILE_SIZE_K;

const uint tx = threadIdx.x % TILE_SIZE_K;

// Move pointers from A[0], B[0] and C[0] to the starting positions of the tile

A += block_row * TILE_SIZE_M * N;

B += block_column * TILE_SIZE_K;

C += (block_row * TILE_SIZE_M * K) + (block_column * TILE_SIZE_K);

// Calculate position of thread within shared memory tile

const uint smem_ty_A = threadIdx.x / TILE_SIZE_N;

const uint smem_tx_A = threadIdx.x % TILE_SIZE_N;

const uint smem_ty_B = threadIdx.x / TILE_SIZE_K;

const uint smem_tx_B = threadIdx.x % TILE_SIZE_K;

// Calculate number of tiles

const uint num_tiles = CEIL_DIV(N, TILE_SIZE_N);

// Initialise thread-local results in registers

float thread_results[ROWS_PER_THREAD] = {0.0f};

// Iterate over tiles

for (int t = 0; t < num_tiles; t++) {

sharedA[smem_ty_A * TILE_SIZE_N + smem_tx_A] =

A[smem_ty_A * N + smem_tx_A];

sharedB[smem_ty_B * TILE_SIZE_K + smem_tx_B] =

B[smem_ty_B * K + smem_tx_B];

__syncthreads();

// Inner computation loop

for (int i = 0; i < TILE_SIZE_N; i++) {

float fixed_B = sharedB[i * TILE_SIZE_K + tx];

for (int row = 0; row < ROWS_PER_THREAD; row++) {

uint global_row_idx = ty * ROWS_PER_THREAD + row;

thread_results[row] +=

sharedA[global_row_idx * TILE_SIZE_N + i] *

fixed_B;

}

}

__syncthreads();

// Move to next tile

A += TILE_SIZE_N;

B += TILE_SIZE_N * K;

}

// Write results back to C

for (int row = 0; row < ROWS_PER_THREAD; row++) {

uint global_row_idx = ty * ROWS_PER_THREAD + row;

C[global_row_idx * K + tx] =

(alpha * thread_results[row]) +

(beta * C[global_row_idx * K + tx]);

}

}

Last kernel reduced memory IO stalls, but we were still doing too many shared memory reads per thread per output:

- 9108 SMEM reads per output

- 254 GMEM reads per output

In this kernel, each thread now computes a tile of rows and columns, not just a vertical strip. That brought it down to:

- 2024 SMEM reads per output

- 128 GMEM reads per output

That is a 4.5x reduction in SMEM load traffic per output and 2× in GMEM, while computing 8× more results per thread.

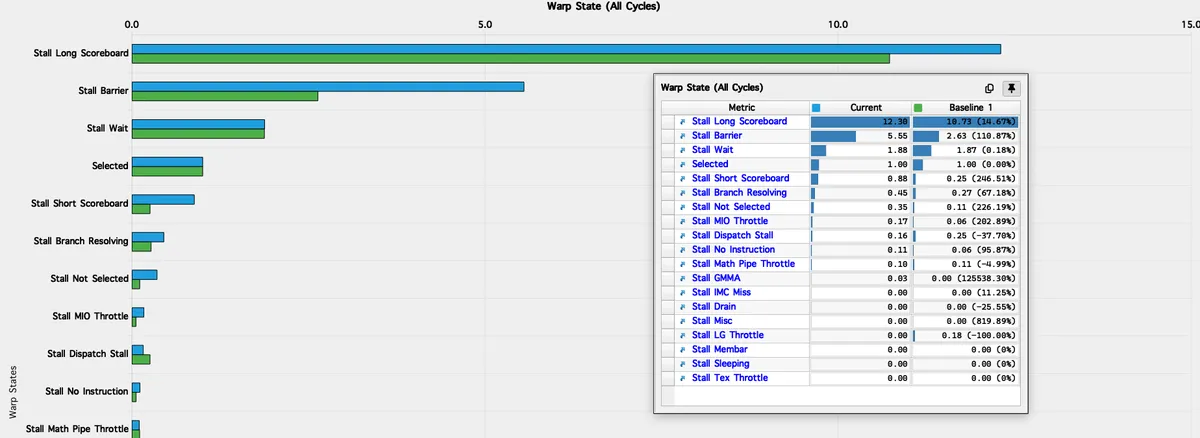

Profiling this kernel shows that it reaches 38% of the H100’s FP32 peak, which immediately tells us we are not compute-bound. Global memory is also nowhere near saturated: the kernel uses only 2.90% of DRAM bandwidth and about 10.13% of L2, both extremely low for a GEMM workload. So neither global bandwidth nor L2 traffic is limiting anything.

The first meaningful signal appears in the Speed of Light section:

- Compute Throughput: 55.50%

- Memory Throughput: 85.88%

- L1/TEX Throughput: 87.74%

The profiler even points us directly toward the bottleneck:

“The kernel is utilising greater than 80% of the available compute or memory performance. To further improve performance, work will likely need to be shifted from the most utilised unit to another. Start by analysing L1 in the Memory Workload Analysis section.”

Since DRAM and L2 are barely touched while L1/TEX is approaching 90% utilisation, the pressure is clearly concentrated in the on-chip memory hierarchy. In other words, this is not DRAM problem at all. The limiting factor is the bandwidth and latency of the L1 cache/SMEM path.

This picture is reinforced by the scheduler metrics. SM Issue Active is 55.50%, meaning the warp scheduler issues instructions for just over half of all cycles. The remaining ~45% of cycles are spent stalled, typically waiting on data movement through L1/SMEM rather than through DRAM or L2.

Looking at the scheduler statistics we find:

"Every scheduler is capable of issuing one instruction per cycle, but for this kernel each scheduler only issues an instruction every 1.8 cycles. This might leave hardware resources underutilized and may lead to less optimal performance."

The Stall MIO throttle is also on 0.59, so we want to reduce this. Taken together: extremely low DRAM usage, extremely low L2 usage, high L1/TEX utilisation (∼88%), and only mid-50% SM issue all converge to the same conclusion. In this

This kernel is L1-bound or SMEM-bound. It is not compute-bound, and it is certainly not GMEM-bound. The bottleneck is the on-chip data movement between registers, shared memory, and the L1/TEX path. Our next optimisation will require reducing the instruction overhead within the L1/SMEM pipeline, directly addressing the 1.8 cycles per instruction rate and freeing the warp scheduler to increase SM Issue Active.

Despite the limitations, we still land a 1.40× gain over the previous kernel: 19.1 TFLOPs versus 12.2 TFLOPs, pushing us up to 36.8% of cuBLAS.

Kernel 5: Vectorised 2D Register Tiling

Up until now, in all our previous kernels we issued one load instruction per scalar. It is slightly confusing because the coalescing we optimised for might suggest that we are not really doing one load per scalar. The missing detail is that there is an important distinction between:

- memory transactions

- the number of instructions issued

Coalescing only helps with the first point. It allows the hardware to merge the data requested by different threads into a single contiguous memory transaction. It does not change the second point at all. Every scalar load still appears as a separate instruction, and each one must be issued by the warp scheduler and pushed through the load and store pipeline.

To confirm this, inspecting the SASS of the previous kernel, specifically the parts where we load from GMEM to SMEM, we do in fact find that every loop iteration generates a separate instruction issue per thread. While we know the accesses to B for example are coalesced, which achieves its purpose at the memory controller level, the hardware still requires four separate instruction issues per threadCoalescing does not happen at compile time. The hardware performs it dynamically at runtime once the actual addresses are known. This is sensible, since the compiler cannot assume alignment or layout since our matrix pointers are passed in as function arguments.. Now, extrapolate this across the whole warp: every time the kernel cycles through this section, the warp scheduler must issue a total of 32 threads * 4 loads/thread = 128 separate load instructions. Even though the memory controller may merge all those 128 scalar requests into a handful of large, efficient memory transactions, the pipeline bottleneck remains at the frontend. The warp scheduler is overworked, and the load/store pipeline is saturated with repetitive requests, starving the actual compute units of valuable clock cycles.

The only way to resolve the instruction pressure is to fundamentally change how the compiler views the data transfer. We must tell the compiler that when it sees a single load request, it should fetch multiple scalars at once. CUDA offers vectorised variables that allow us to reduce this instruction pressure. These refer to wider data types such as float2 or float4. A regular float is 32 bits (4 bytes). A float4 is 128 bits (16 bytes). By casting a float* to a float4* and issuing a single load, we trigger one 128-bit global memory instruction. In SASS this appears as LDG.E.128 and sometimes LDG.E.CI.128. This reduces the load instruction count dramatically and makes more efficient use of the hardware memory paths.

Let’s look at the next kernel then. The whole structure remains the same, the only difference is that we will transpose sharedA. So loading a row from GMEM will be stored in sharedA as a column. We do this to allow us to use vectorised loads, since issuing a vectorised load instruction requires the addresses to be physically contiguous.

The logic centres on the access pattern for Matrix A. Since each thread needs to load the ROWS_PER_THREAD elements required for its calculation (which correspond to traversing a column), the default memory accesses are strided. If we transpose the column data, it will be physically stored as a contiguous row in sharedA. This allows us to issue float4 loads later when moving data from the SMEM to reg_m.

The same approach applies for sharedB, but we won't be transposing it. As we recall from the last kernel, each thread loads COLS_PER_THREAD into reg_k, and these elements are already next to each other on the same row, so we don't need to transpose.

What doing this leads to is the complete abandonment of the scalar + offset approach, and so we won't need to loop with strides to load from GMEM to SMEM. This also means that when we load into registers, since we can issue float4 and both ROWS_PER_THREAD and COLS_PER_THREAD are 8, we will still load into registers in a loop but will now stride in steps of 4. This all might sound confusing, so as usual, I will draw it visually.

Now let’s inspect the SASS for this kernel, compare it with the previous version, and then look at what the profiler tells us.

We can see that the number instructions issued per thread dropped from 8 to just 2 using our approach. The visual above shows only the GMEM loading phase with LDG.E.CI.128, but similarly we cut down the number of instructions when reading from SMEM to RMEM (Register Memory). While I won't put it here because it's much longer, we can clearly still see the LDS.U.128 instruction in the SASS, confirming the success of vectorising the shared memory reads as well. You can still see the full SASS/PTX for this kernel if you are interested to look into it more.

Running this kernel we get another ≈2x speedup and now at 72% of cuBLAS performance with 37.2 TFLOP/s. Great, we are getting there (FP32 path only ofc yet! still a long way to beat cuBLAS with tensor cores)

The profiler shows the kernel is now making reasonably good use of our GPU, compute throughput sits around 66% of peak, memory throughput around 85%, and we’re hitting 56% of the device FP32 roofline.

Bare in mind cuBLAS sits at around 85%, and no practical workload would actually reach 100% of the hardware theoretical peak.

Now let's compare the issues we found before with our new profiler results for this kernel:

SM Issue Active: Increased from 55.50% to 66.05% (More time spent on compute. The scheduler is ∼19% busier).SM Pipe Fma Cycles Active: Increased from 42.00% to 56.73% (More computations are being done).SM Inst Executed Pipe Lsu(Load Store Unit): Decreased from 28.78% to 17.09% (Evidence of the instruction count drop).SM Mio Inst Issued: Decreased from 14.99% to 9.21%.Stall MIO Throttle: Decreased from 0.59 to 0.02.

Furthermore, the warning regarding the scheduler issuing only 1.8 cycles per instruction is now gone. Massive improvements, but there are still improvements to be made.

Looking carefully, there are some key metrics subtleties that are hurting our current performance and holding us back from achieving higher compute throughput.

Most critically, shared memory accesses show heavy bank conflicts: about 5-way conflicts on loads and 2.6-way conflicts on stores, with over 40% of all shared memory wavefronts wasted in serialisation.

In Nsight Compute, a wavefront refers to the hardware chunk of shared memory requests that can be handled in a single cycle. When bank conflicts occur, the request must split into multiple wavefronts, each processed one after the other, causing stalls.

We haven’t really considered bank conflicts yet in our kernels, so this is a suitable time to introduce the concept.

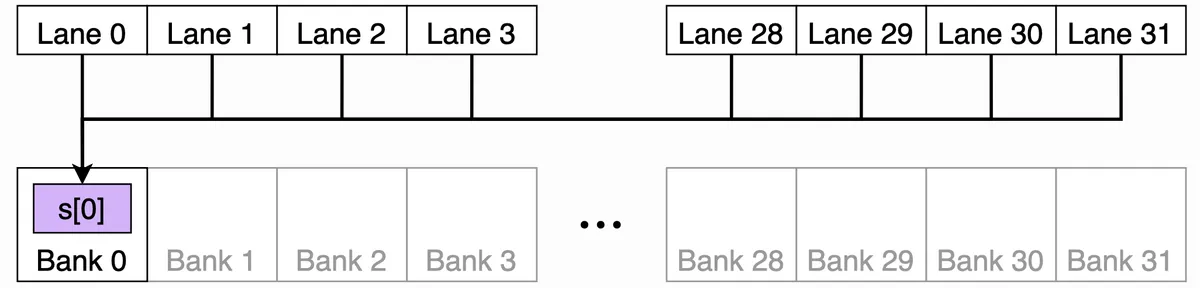

I tried to draw the shared memory organisation to visualise this better, but the key concept to grasp is that shared memory on NVIDIA GPUs (including H100) is divided into 32 banks, each of which can serve one 4-byte word per cycle. I like to think of it like thirty-two checkout lanes at a supermarket, with each lane handling one customer per cycle. A “word” here just means the basic unit of storage: 4 bytes (so a single float for ex).

The bank index can then be simply calculated using the common modulo trick:

bank_index = word_index % 32

- Bank 0: words 0, 32, 64, …

- Bank 1: words 1, 33, 65, …

- …

- Bank 31: words 31, 63, 95, …

Now, when a warp of 32 threads issues a shared memory access:

- If each thread touches a different bank, no conflict, everyone gets served in parallel. Good!

- If multiple threads try to read/write different addresses in the same bank, those requests are serialised, one after another. That’s a bank conflict.

- If all threads read the exact same word, the hardware does a broadcast instead, which is efficient. Also good!

In this diagram, all 32 lanes can read from the same banked word.

The hardware broadcasts the value efficiently instead of causing a conflict.

In this diagram, all 32 lanes can read from the same banked word.

The hardware broadcasts the value efficiently instead of causing a conflict.

Let us start with the store conflicts. In our code the ≈ 2.6 conflicts on stores show up when we populate sharedA with the transposed tile.

// Populate smem using vector loads

float4 tempA = reinterpret_cast<const float4*>(&A[smem_ty_A * N + smem_tx_A*4])[0]; // [0] dereference issues one ld.global.nc.v4.f32

// Transpose A (instead of 128x8 previously for ex, now it will be 8x128)

sharedA[(smem_tx_A * 4 + 0) * TILE_SIZE_M + smem_ty_A] = tempA.x;

sharedA[(smem_tx_A * 4 + 1) * TILE_SIZE_M + smem_ty_A] = tempA.y;

sharedA[(smem_tx_A * 4 + 2) * TILE_SIZE_M + smem_ty_A] = tempA.z;

sharedA[(smem_tx_A * 4 + 3) * TILE_SIZE_M + smem_ty_A] = tempA.w;

smem_ty_A ranges across the columns in our transposed sharedA and smem_tx_A is either 0 or 1 (in this kernel configuration ofc). The word index for each scalar store is:

word_index = (smem_tx_A*4 + q) * TILE_SIZE_M + smem_ty_A→ q in {0,1,2,3}bank = word_index % 32

With TILE_SIZE_M = 128, the leading stride is divisible by 32 banks. Because 128 % 32 = 0, the bank depends only on the offset that survives the calculation, not on the stride factor.

The bank effectively depends only on the smem_ty_A value (the column offset) and not on the smem_tx_A value (the row index). Since each two threads share the same smem_ty_A value, they all target the same bank for each of the four scalar stores. This is exactly the pattern the profiler called out as two-way store conflicts.

One common trick to avoid this class of conflict when the leading stride is a multiple of 32 words is padding.

// Allocate shared memory. Use padded leading strides that keep float4 alignment

constexpr uint STRIDE_A = (TILE_SIZE_M % 32u == 0u) ? (TILE_SIZE_M + 4u) : TILE_SIZE_M;

constexpr uint STRIDE_B = (TILE_SIZE_K % 32u == 0u) ? (TILE_SIZE_K + 4u) : TILE_SIZE_K;

static_assert((STRIDE_A % 4u) == 0u, "STRIDE_A must keep float4 alignment");

static_assert((STRIDE_B % 4u) == 0u, "STRIDE_B must keep float4 alignment");

If we pad the leading stride to 132 words and use that padded stride everywhere we touch sharedA (both when writing the transpose and when reading it later), the index driving the row smem_tx_A now influences the bank. The two lanes that used to collide are split onto sixteen banks apart, and the four scalar stores for x, y, z, w rotate across banks rather than piling onto one. To prove this, I profiled the kernel after padding and the results showed store conflicts were eliminated.

Now we still have the actually bigger conflict which is the five-way bank conflict for the loads. The conflict mainly happens when loading from sharedB, particularly at:

for (int col = 0; col < COLS_PER_THREAD; col += 4) {

uint global_smem_col_idx = tx * COLS_PER_THREAD + col;

float4 temp_shared_B =

reinterpret_cast<float4*>(&sharedB[i * TILE_SIZE_K + global_smem_col_idx])[0];

reg_k[col + 0] = temp_shared_B.x;

reg_k[col + 1] = temp_shared_B.y;

reg_k[col + 2] = temp_shared_B.z;

reg_k[col + 3] = temp_shared_B.w;

}

For lanes 0..15, ty is still 0 but tx walks 0..15. If we freeze col = 0 to make it simple, the bank for the first word of each lane’s float4 will be:

bank = (i* 128 + 8 * tx) % 32 = (8 * tx) % 32- = 0, 8, 16, 24, 0, 8, 16, 24, ... only four banks used by a half warp

Now remember we are doing vectorised float4 loads so it spans four consecutive banks for that lane. So lane with bank start 0 touches banks {0,1,2,3}, lane with 8 touches {8,9,10,11}, lane with 16 touches {16,17,18,19}, lane with 24 touches {24,25,26,27}, and so on.

Since the pattern repeats every four lanes, that means we have four lanes that all want banks {0..3} at the same time, four lanes that all want {8..11}, etc. This is where we get a four-way conflict for this instruction.

For sharedA loads it’s different. What varies across lanes inside a half warp is tx, but tx does not appear in the address. Inside one half warp ty is constant. For fixed i and row, every lane computes the same address. So all sixteen lanes in the half warp read the same four words of sharedA at that step. As I said earlier this can be broadcasted, so it’s conflict-free in terms of load.

The important bit here is that padding does not fix this load conflict. Padding helps when the varying part of the address is multiplied by a stride that is a multiple of thirty-two words. In the sharedB load above, the varying part is tx * COLS_PER_THREAD + col, and that bit is not multiplied by the padded stride. So even if we set STRIDE_B = 132, the lanes inside a half warp still bunch onto the same four bank groups. So, padding solved the store side, but the sharedB load conflicts need a different approach.

The final code for our padded vectorised 2D register tiling kernel looks like this:

template <const uint TILE_SIZE_M, const uint TILE_SIZE_N, const uint TILE_SIZE_K, const uint ROWS_PER_THREAD, const uint COLS_PER_THREAD>

__global__ void sgemm_vectorised(const float *__restrict__ A, const float *__restrict__ B, float *__restrict__ C,

int M, int N, int K, float alpha, float beta)

{

// Allocate shared memory. Use padded leading strides that keep float4 alignment

constexpr uint STRIDE_A = (TILE_SIZE_M % 32u == 0u) ? (TILE_SIZE_M + 4u) : TILE_SIZE_M;

constexpr uint STRIDE_B = (TILE_SIZE_K % 32u == 0u) ? (TILE_SIZE_K + 4u) : TILE_SIZE_K;

static_assert((STRIDE_A % 4u) == 0u, "STRIDE_A must keep float4 alignment");

static_assert((STRIDE_B % 4u) == 0u, "STRIDE_B must keep float4 alignment");

// Allocate shared memory

__shared__ float sharedA[STRIDE_A * TILE_SIZE_N];

__shared__ float sharedB[TILE_SIZE_N * STRIDE_B];

// Identify the tile of C this thread block is responsible for

const uint block_row = blockIdx.y;

const uint block_column = blockIdx.x;

// Calculate position of thread within tile (Remapping from 1-D to 2-D)

const uint ty = threadIdx.x / (TILE_SIZE_K / COLS_PER_THREAD);

const uint tx = threadIdx.x % (TILE_SIZE_K / COLS_PER_THREAD);

// Move pointers from A, B, C to tile starts

A += block_row * TILE_SIZE_M * N;

B += block_column * TILE_SIZE_K;

C += (block_row * TILE_SIZE_M * K) + (block_column * TILE_SIZE_K);

// Map each thread to one 4-float chunk

const uint smem_ty_A = threadIdx.x / (TILE_SIZE_N / 4);

const uint smem_tx_A = threadIdx.x % (TILE_SIZE_N / 4);

const uint smem_ty_B = threadIdx.x / (TILE_SIZE_K / 4);

const uint smem_tx_B = threadIdx.x % (TILE_SIZE_K / 4);

// Tile count

const uint num_tiles = CEIL_DIV(N, TILE_SIZE_N);

float thread_results[ROWS_PER_THREAD * COLS_PER_THREAD] = {0.0f};

float reg_m[ROWS_PER_THREAD] = {0.0f};

float reg_k[COLS_PER_THREAD] = {0.0f};

// Outer loop iterate over tiles

for (int t = 0; t < num_tiles; t++)

{

// Populate smem using vector loads

float4 tempA = reinterpret_cast<const float4 *>(&A[smem_ty_A * N + smem_tx_A * 4])[0];

sharedA[(smem_tx_A * 4 + 0) * STRIDE_A + smem_ty_A] = tempA.x;

sharedA[(smem_tx_A * 4 + 1) * STRIDE_A + smem_ty_A] = tempA.y;

sharedA[(smem_tx_A * 4 + 2) * STRIDE_A + smem_ty_A] = tempA.z;

sharedA[(smem_tx_A * 4 + 3) * STRIDE_A + smem_ty_A] = tempA.w;

float4 tempB = reinterpret_cast<const float4 *>(&B[smem_ty_B * K + smem_tx_B * 4])[0];

reinterpret_cast<float4 *>(&sharedB[smem_ty_B * STRIDE_B + smem_tx_B * 4])[0] = tempB;

__syncthreads();

// Outer loop over shared dimension N

for (int i = 0; i < TILE_SIZE_N; i++)

{

// Load regs from sharedA

for (int row = 0; row < ROWS_PER_THREAD; row += 4)

{

uint global_smem_row_idx = ty * ROWS_PER_THREAD + row;

float4 temp_shared_A = reinterpret_cast<float4 *>(&sharedA[i * STRIDE_A + global_smem_row_idx])[0];

reg_m[row + 0] = temp_shared_A.x;

reg_m[row + 1] = temp_shared_A.y;

reg_m[row + 2] = temp_shared_A.z;

reg_m[row + 3] = temp_shared_A.w;

}

// Load regs from sharedB

for (int col = 0; col < COLS_PER_THREAD; col += 4)

{

uint global_smem_col_idx = tx * COLS_PER_THREAD + col;

float4 temp_shared_B = reinterpret_cast<float4 *>(&sharedB[i * STRIDE_B + global_smem_col_idx])[0];

reg_k[col + 0] = temp_shared_B.x;

reg_k[col + 1] = temp_shared_B.y;

reg_k[col + 2] = temp_shared_B.z;

reg_k[col + 3] = temp_shared_B.w;

}

// Outer product

for (uint m = 0; m < ROWS_PER_THREAD; m++)

for (uint k = 0; k < COLS_PER_THREAD; k++)

thread_results[m * COLS_PER_THREAD + k] += reg_m[m] * reg_k[k];

}

__syncthreads();

A += TILE_SIZE_N;

B += TILE_SIZE_N * K;

}

// Write results back

for (uint row = 0; row < ROWS_PER_THREAD; row++)

for (uint col = 0; col < COLS_PER_THREAD; col += 4)

{

uint global_row_idx = ty * ROWS_PER_THREAD + row;

uint global_col_idx = tx * COLS_PER_THREAD + col;

float4 tempC = reinterpret_cast<float4 *>(&C[global_row_idx * K + global_col_idx])[0];

tempC.x = (alpha * thread_results[row * COLS_PER_THREAD + col]) + (beta * tempC.x);

tempC.y = (alpha * thread_results[row * COLS_PER_THREAD + col + 1]) + (beta * tempC.y);

tempC.z = (alpha * thread_results[row * COLS_PER_THREAD + col + 2]) + (beta * tempC.z);

tempC.w = (alpha * thread_results[row * COLS_PER_THREAD + col + 3]) + (beta * tempC.w);

reinterpret_cast<float4 *>(&C[global_row_idx * K + global_col_idx])[0] = tempC;

}

}

Kernel 6: Warp Tiling

So far we exploited two levels of parallelism.

- Block tiling: Each thread block computed a large tile of the output matrix C, reusing tiles of A and B from shared memory.

- Register tiling: Each thread computed a small sub-tile of C

(ROWS_PER_THREAD × COLS_PER_THREAD)entirely in registers, maximising data reuse before writing results back to global memory.

For this kernel, we will introduce a new level of tiling between block tiling and thread tiling and that is warp tiling.

Warp tiling sits between block tiling and thread tiling in the optimisation hierarchy. Instead of having all threads in a block cooperatively work on one large tile, we partition that tile into smaller sub-tiles, each assigned to a warp. This turns the warp into the middle-level unit of computation. The block still covers a 128 × 128 patch of C, but we split it into four 64 × 64 sub-tiles. Two warps along M by two warps along K which give us four warps per block.

TILE_SIZE_M = 128

TILE_SIZE_N = 16

TILE_SIZE_K = 128

WARP_TILE_M = 64

WARP_TILE_K = 64

WARP_STEPS_K = 4

ROWS_PER_THREAD = 8

COLS_PER_THREAD = 4

NUM_THREADS = 128 // four warps per block

The block still works cooperatively to load data from GMEM into SMEM (using the vectorised, padded, and transposed techniques from the previous kernel). Once the data is on-chip, the threads split into four warps, with each warp taking exclusive ownership of one quadrant of the output matrix, identified by warp_row and warp_col.

The 32 threads within a warp utilise their specific sub-indices (ty, tx derived from the 8×4 thread sub-grid) to attack their assigned 64×64 region. The warp covers the vertical dimension in one pass WARP_STEPS_M = 1 but must iterate horizontally WARP_STEPS_K=4 times (These are configurable ofc but need to be done carefully!). The partial results accumulation occurs within the loop that iterates over the TILE_SIZE_N shared dimension (i loop). In the compute loop, this design allows the thread to load the reg_m fragment from sharedA once and reuse it across the four horizontal steps, loading fresh reg_k data from sharedB for each step before summing the results of the outer product (between reg_m and reg_k) into the large thread_results array, accumulating the final result across all i iterations.

I will start by showing the high-level structure of the kernel, illustrating how warp tiling integrates as our new level of hierarchy. Following that, we will take the POV of a single thread using some dummy parameters, to visualise this thread's entire lifetime: where its computation happens, and exactly where it performs its loads and stores.

Now, we visualise the flow of computation from a single thread's perspective.

This extra level of tiling provides several benefits:

Alignment with hardware scheduling:

The warp is the fundamental execution unit in NVIDIA GPUs. By giving each warp its own sub-tile of the output, we align our work partitioning with the way the hardware actually schedules instructions.

By doing so, each warp can execute independently. If one warp stalls on memory, others can continue executing, which keeps warp scheduler slots full and reduces idle cycles.

From Simon's blog

From Simon's blog

Control over shared memory access

Warp tiles keep each warp’s footprint compact and the per-lane strides simple and repeatable. That makes it easier to design bank-friendly layouts. Spoiler alert! that’s why SMEM load conflicts didn’t show up for this kernel.

Improved register cache locality

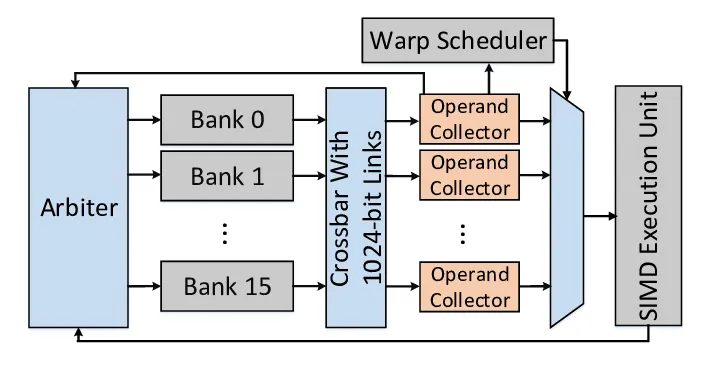

The register file (RF) inside each Streaming Multiprocessor stores per-thread variables. On Hopper, it’s split into multiple single-ported banks (similar to SMEM banks!). A bank can only serve one access per cycle. If two threads in the same warp try to read from the same bank in the same cycle, the accesses are serialized. This is called a bank conflict as well but for registers and it increases the time it takes to fetch operands for an instruction. Unfortunately NVIDIA’s profiling tools don’t provide metrics for these conflicts so it is hard to verify whether that was something we actually improved in this kernel.

Between the RF and the execution units are Operand Collector Units (OCUs) Paper: BOW Breathing Operand Windows to Exploit Bypassing in GPUs . Each OCU fetches source operands from the register banks and stores them in a small buffer, with space for three 128-byte entries. If an operand is needed again soon, it can be served directly from this buffer instead of going back to the main RF. This avoids both bank conflicts and extra RF traffic.

Warp tiling helps here because each warp works on a small, fixed sub-tile of the output matrix, so it tends to reuse the same registers repeatedly in the inner loop. This makes bank conflicts less likely and increases the chances that operands can be reused directly from the OCU buffer.

Again this is a speculation, Idk really if it makes a difference, but it seems plausible.

The main parts of the code that changed looks like this:

// Iterate over the shared dimension of the SMEM tiles

for (int i = 0; i < TILE_SIZE_N; i++)

{

// Load slice at current i iteration in sharedA's register

for (int wSubRow = 0; wSubRow < WARP_STEPS_M; wSubRow++)

{

uint base_row =

(warp_row * WARP_TILE_M) +

(wSubRow * WARP_SUB_M) +

(ty * ROWS_PER_THREAD);

// Each thread loads ROWS_PER_THREAD into the register

for (int row = 0; row < ROWS_PER_THREAD; row += 4)

{

const float4 va =

reinterpret_cast<const float4*>(

&sharedA[i * STRIDE_A + base_row + row])[0];

reg_m[wSubRow * ROWS_PER_THREAD + row + 0] = va.x;

reg_m[wSubRow * ROWS_PER_THREAD + row + 1] = va.y;

reg_m[wSubRow * ROWS_PER_THREAD + row + 2] = va.z;

reg_m[wSubRow * ROWS_PER_THREAD + row + 3] = va.w;

}

for (int wSubCol = 0; wSubCol < WARP_STEPS_K; wSubCol++)

{

uint col_base =

(warp_col * WARP_TILE_K) +

(wSubCol * WARP_SUB_K) +

(tx * COLS_PER_THREAD);

// Each thread loads COLS_PER_THREAD into the register x 4 times in our case since WARP_STEPS_K = 4

for (int col = 0; col < COLS_PER_THREAD; col += 4)

{

const float4 vb =

reinterpret_cast<const float4*>(

&sharedB[i * STRIDE_B + col_base + col])[0];

reg_k[wSubCol * COLS_PER_THREAD + col + 0] = vb.x;

reg_k[wSubCol * COLS_PER_THREAD + col + 1] = vb.y;

reg_k[wSubCol * COLS_PER_THREAD + col + 2] = vb.z;

reg_k[wSubCol * COLS_PER_THREAD + col + 3] = vb.w;

}

}

// Compute outer product

for (int wSubRow = 0; wSubRow < WARP_STEPS_M; wSubRow++)

{

for (int wSubCol = 0; wSubCol < WARP_STEPS_K; wSubCol++)

{

for (int im = 0; im < ROWS_PER_THREAD; im++)

{

float fixed_temp =

reg_m[wSubRow * ROWS_PER_THREAD + im];

for (int ik = 0; ik < COLS_PER_THREAD; ik++)

{

float out =

fixed_temp * reg_k[wSubCol * COLS_PER_THREAD + ik];

int out_idx =

(wSubRow * ROWS_PER_THREAD + im) *

(WARP_STEPS_K * COLS_PER_THREAD) +

(wSubCol * COLS_PER_THREAD + ik);

thread_results[out_idx] += out;

}

}

}

}

}

}

__syncthreads();

A += TILE_SIZE_N; // Move right

B += TILE_SIZE_N * K; // Move down

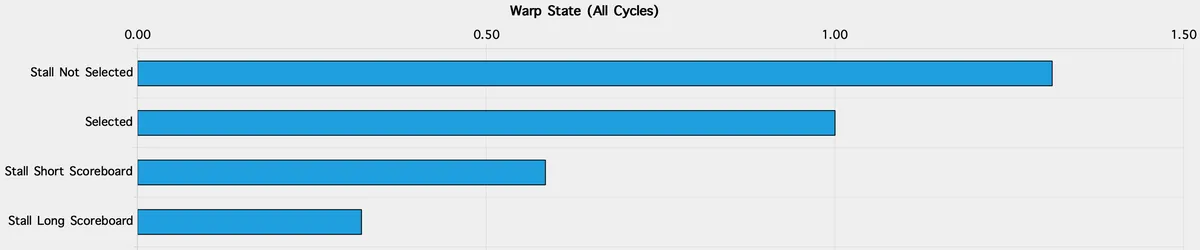

I tested out this kernel before and after padding:

Unpadded warp tiling

- Compute: SM busy 74%, FMA the top pipe (64% of active cycles), executed IPC ~2.97.

- Memory: ~372 GB/s, L1/TEX hit ~4.3%, Mem Busy ~55%.

- Conflicts: Shared stores reported ~4-way average bank conflicts; shared loads were not flagged.

- Pressure/occupancy: ~165 registers per thread → achieved occupancy 18%; scheduler shows alot of “not selected” gaps (33% of inter-issue cycles).

Padded warp tiling

- Compute: SM busy ~75–76%, executed IPC ~3.03–3.04 (slight uptick).

- Memory: ~394–396 GB/s, L1/TEX hit rises to ~7–9%, Mem Busy ~52%.

- Conflicts: Shared stores drop to ~2.5-way on average. Shared loads still not flagged.

- Pressure/occupancy: ~167 registers/thread, achieved occupancy still 18%; “not selected” stalls remain a noticeable slice (31%).

So as a recap. In this warp-tiling kernel, padding mainly helped the store path (the transpose writes into sharedA), which matches the store-conflict counters dropping from about 4.0 to roughly 2.5-way. Load conflicts were not the issue here, unlike the earlier vectorised kernel. Two things quietly helped on the load side without us doing anything fancy: we use COLS_PER_THREAD = 4, which spreads sharedB lanes across more bank groups, and the warp-local sub-tile keeps lane patterns less aliasy. Together that’s why the profiler did not flag shared-load conflicts in either the unpadded or padded runs.

What still holds us back is elsewhere. Register pressure keeps achieved occupancy around ~18%, which shows up as “not selected” scheduler stalls. And we’re still broadly compute-bound (FMA busy in the high-60s) with memory ~52%, so squeezing a few extra GB/s won’t move the needle as much as overlapping copies with compute or trimming registers to buy another resident warp.

Kernel 7: Tensor Cores (Async TMA + WGMMA)

📝 Important Note: Starting from this kernel I am flipping dims making

A = MxKandB=KxN. The reason for that is later tensor core instructions expect matrices to be in that format. The logic in all previous kernels remain the same its just a naming convention. In future iterations, I will change all code and diagrams above for consistency.

We briefly mentioned the Tensor Cores component in H100 in the intro. Let's now look closely at how they operate and how we can use them to significantly increase our performance. By the end of this kernel, we should see performance skyrocket, so let's wait and see!

One of the most significant advancements in NVIDIA’s recent GPU architectures is the introduction and evolution of Tensor Cores. They are the main reason one would purchase high-end GPUs for serious parallel compute. They weren't always present; they were first introduced with the Volta architecture (V100). This means that all of our previous kernels, optimised for standard CUDA cores, represent the state-of-the-art for pre-Volta architectures.

Tensor Cores fundamentally change the GPU's computational model. They are specialised engines purpose-built to accelerate matrix multiplication and accumulation (MMA).

Unlike CUDA Cores that execute simple, scalar instructions (like

a @ b + c), Tensor Cores execute a single instruction that performs an entire matrix operation, such asD = A @ B + C. This architecture is often compared to a Complex Instruction Set Computer (CISC)A single CISC instruction can perform several low-level operations in one step, such as loading a value from memory, performing an arithmetic calculation, and writing the result back to memory. By contrast, RISC architectures use very simple instructions that each perform only one basic operation at a time. .Power Density: This CISC-like approach is key to their massive speed. Operating on large blocks of data per instruction dramatically reduces the per-operation overhead (like instruction decoding).

For example:

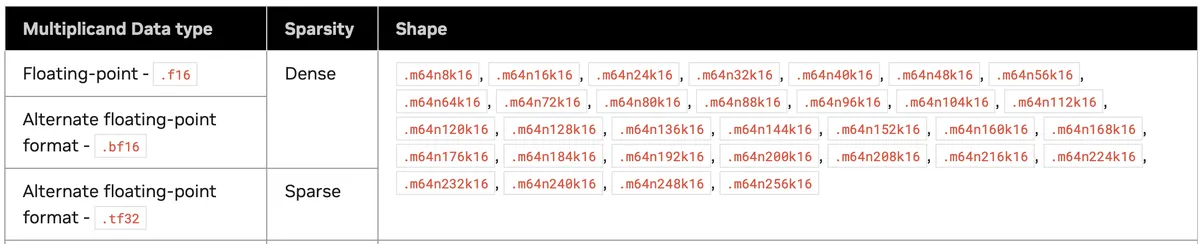

The Warp Group Matrix Multiply and Accumulate (WGMMA) instruction, which we will use later, is written as wgmma.mma_async.sync.aligned.m64n64k16.f32.bf16.bf16. Those m64n64k16 denote our matrices dimensions. The outer dimensions are m and n, these come first and last, and the shared inner dimension for the accumulation, k, is in the middle. This complex instruction calculates D = A @ B + C for matrices A, B, and the accumulator C (where C is often the same physical matrix as D). Multiplying these out, we see that this instruction performs 64 * 16 * 64 = 65,536 multiply-accumulate (MAC) operations.

Compare that to our CUDA cores-only warp tiling kernel:

float out = fixed_temp * reg_k[...]; // multiplication

thread_results[out_idx] += out; // addition (accumulation)

We did only 1 MAC per instruction (FMA). To complete the same work, the CUDA Cores would need to execute 65,536 FMA instructions. In WGMMA, one warp group (128 threads), one WGMMA instruction performs all 65,536 MACs.

Let's step back for a moment as we introduced two new concepts the WGMMA and warp-group. These concepts are unique to the Hopper architecture and did not exist on earlier GPUs. To understand why they matter, and why we will rely on WGMMA in this kernel, it helps to look briefly at how tensor cores were programmed before Hopper and how the programming model evolved to this point.

Before Hopper, the usual way to program tensor cores was through the WMMA API (Warp Matrix Multiply Accumulate). This interface was introduced with Volta and carried through Turing and Ampere as nvcuda::wmma. It provided a high level abstraction to utilise tensor cores, and the API handled most of the internal details.

NVIDIA later exposed the underlying tensor core instructions directly. This introduced the MMA PTX instructions on Turing and Ampere. These also operate at the warp level, where 32 threads cooperate to perform smaller MMA operations on matrices. To feed these instructions correctly the architecture added a special warp wide load instruction called ldmatrix, which pulls the required packed fragments from shared memory into registers. At this stage a typical tensor core kernel followed a clear pattern inside each warp: use ldmatrix to load fragments of A and B from SMEM, issue one or more mma.sync instructions, and then write out the accumulated results.

With Hopper, tensor operations scale up from the warp level to the warp group level, where 128 (32*4) threads cooperate on a single, much larger MMA. These instructions no longer work on small warp sized tiles, but instead operate on significantly larger blocks of A and B that must already be present in shared memory. Since WGMMA expects these tiles to appear in a specific swizzled layout, a natural question arises.

How do we place the required matrix tiles into shared memory in the exact format that WGMMA expects, and do so quickly enough that the tensor cores never sit idle?

Thats where the Tensor Memory Accelerator (TMA) we talked about in the intro comes in.

On the H100, TMA solves the data bottleneck by acting as a dedicated, parallel copy engine:

Bulk Loading: It moves entire 2D tiles (blocks of

AandB) from GMEM to SMEM using just a single hardware command.Asynchronous Transfer: Crucially, the transfer runs in the background. This allows the Tensor Cores to work through current data while the TMA is already fetching the next 2D tiles for the next iteration.

The "bulk loading" part hides a lot of complexity that used to be our problem as programmers. In our previous kernels, we had to write tedious code to tell each thread exactly which element(s) to grab. Now, manual indexing is offloaded to the hardware. We no longer have to micromanage threads to load specific elements from GMEM to SMEM.

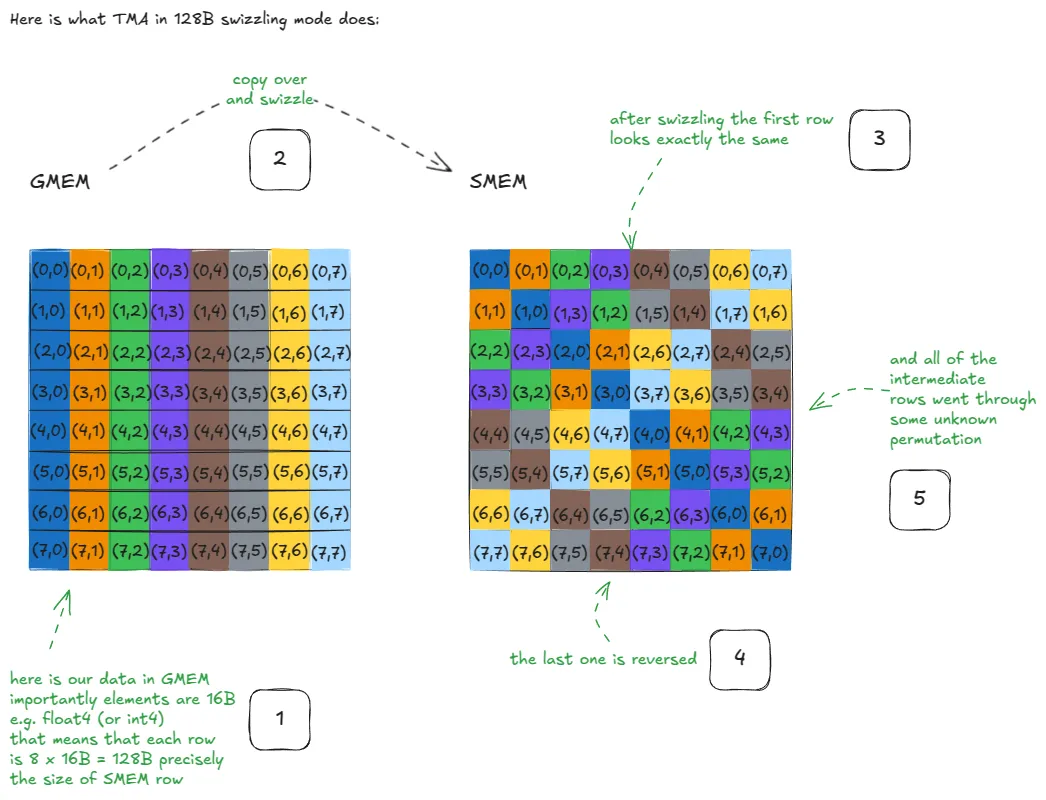

Additionally, we won't need to manually handle padding to avoid SMEM Bank Conflicts like we did before. The hardware automatically applies what’s known as Swizzling. This layout is complex to code by hand, so fortunately, NVIDIA implemented these patterns directly into the TMA. All we need to know is that bank conflicts are essentially handled for us "for free". For more details on the "how" behind the swizzling patterns, Aleksa's post goes into great depth. Here is the figure he used to showcase what a swizzled copy into SMEM looks like at a high level:

To use the TMA, we need to do three main steps:

- Construct tensor maps for our matrices

A&B(On the host). - Trigger TMA operations from the kernel (usually issued by just a single thread in the block).

- Synchronise using specialised Shared Memory barriers.

Tensor Maps

A Tensor Map is a hardware-interpretable descriptor. It describes the shape, layout, and stride of a tensor in memory, allowing the TMA to move entire multi-dimensional tiles without any thread-level address computation.

Unlike earlier kernels, we don't pass raw pointers like const float* A as kernel arguments. Instead, we pass pointers to CUtensorMap descriptors. This is a CUDA Driver defined structure that encodes the full metadata of the matrix (its shape, strides, and its swizzle pattern, allowing the hardware to fetch tiles directly.)

To create these maps, we use the cuTensorMapEncodeTiled function from the CUDA Driver API

At the bottom of the stack sits the CUDA Driver API. It provides the most fine-grained control over the GPU, but is also more verbose and complex. Low-level operations such as tensor map creation are exposed explicitly at this level.

On top of the Driver API sits the CUDA Runtime API, which wraps much of this functionality and offers a higher-level interface. For example, cudaMalloc in the runtime API is a thin wrapper around the driver API’s cuMemAlloc.

Built on top of both APIs are CUDA libraries that provide highly optimised kernels for general and domain-specific workloads, such as cuBLAS for linear algebra and cuDNN for deep neural networks. In practice, most code uses the runtime API, but some Hopper features, such as TMA tensor maps, are currently only exposed through the CUDA Driver API.

. This function takes our description of the matrix and packs it into a 128B hardware descriptor that the TMA engine understands. Because the TMA hardware is so specialised, this 128B object must be aligned to a 128B boundary in memory, or the hardware won't even be able to read it!

The process looks like this:

- Allocate memory for the tensor map on the device using

cudaMalloc. - Encode the map on the Host (CPU) using the Driver API.

- Copy the map from Host to Device using

cudaMemcpy.

In the following code snippet, we perform these steps as follows:

template <const uint BlockMajorSize, const uint BlockMinorSize>

__host__ static inline CUtensorMap *

create_and_allocate_tensor_map(bf16 *tensor_ptr, uint blocks_height, uint blocks_width) {

CUtensorMap *tensor_map;

// Allocate device memory for the tensor map descriptor.

CUDA_CHECK(cudaMalloc((void **)&tensor_map, sizeof(CUtensorMap)));

// Register the tensorMap in our device memory pointers

// resources.add_device_ptr(tensor_map);

// Create on host

CUtensorMap tensor_map_host;

create_tensor_map<BlockMajorSize, BlockMinorSize>(&tensor_map_host, tensor_ptr, blocks_height, blocks_width);

// Copy descriptor to device

CUDA_CHECK(cudaMemcpy(tensor_map, &tensor_map_host, sizeof(CUtensorMap), cudaMemcpyHostToDevice));

return tensor_map;

}

And the function which encodes the tensor's metadata and actually creates the tensor map looks like this:

template <const uint BlockMajorSize, const uint BlockMinorSize>

void create_tensor_map(CUtensorMap *tensor_map, bf16 *tensor_ptr, uint blocks_height, uint blocks_width) {

// Starting address of memory region described by tensor (casting to void

// as the tensor map descriptor is type-agnostic.)

void *gmem_address = static_cast<void *>(tensor_ptr);

uint num_tiles_major = blocks_height;

uint num_tiles_minor = blocks_width;

// full size of the tensor in global memory (API expects the 5D supported

// tensor ranks to be defined)

uint64_t global_dim[5] = {

static_cast<uint64_t>(BlockMinorSize * num_tiles_minor),

static_cast<uint64_t>(BlockMajorSize * num_tiles_major),

1, 1, 1};

// Define the tensor strides (in bytes) along each of the tensor ranks dims - 1

uint64_t global_strides[5] = {

sizeof(bf16),

sizeof(bf16) * BlockMinorSize * num_tiles_minor,

0, 0, 0};

// Define the shape of the "box_size" -> the tile shapes a TMA ops will load

uint32_t box_dim[5] = {

static_cast<uint32_t>(BlockMinorSize),

static_cast<uint32_t>(BlockMajorSize),

1, 1, 1};

uint32_t elem_strides[5] = {1, 1, 1, 1, 1};

// Create tensor map

CU_CHECK(cuTensorMapEncodeTiled(

tensor_map, CU_TENSOR_MAP_DATA_TYPE_BFLOAT16, 2, gmem_address,

global_dim, global_strides + 1, box_dim, elem_strides,

CU_TENSOR_MAP_INTERLEAVE_NONE, CU_TENSOR_MAP_SWIZZLE_128B,

CU_TENSOR_MAP_L2_PROMOTION_NONE, CU_TENSOR_MAP_FLOAT_OOB_FILL_NONE));

}

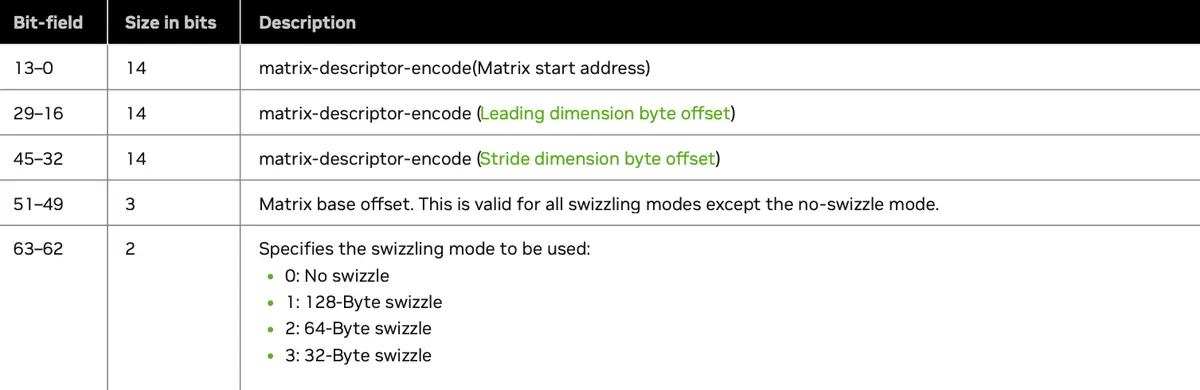

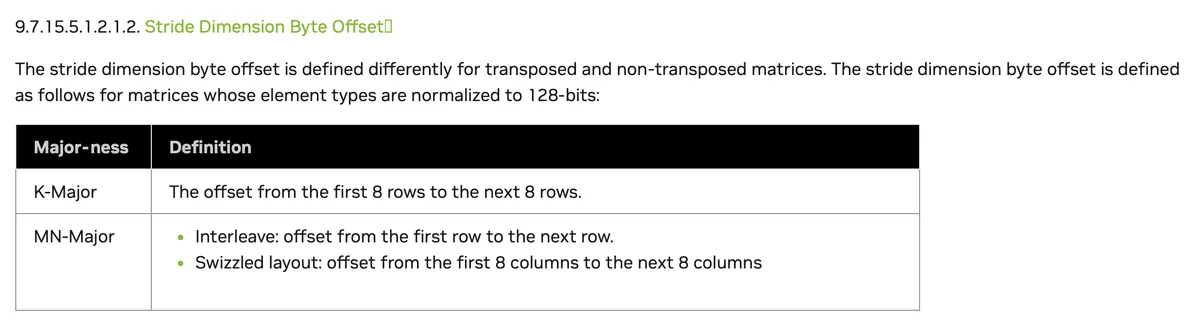

Next, let’s talk about the WGMMA instructions. WGMMA instructions don’t directly use raw byte addresses the way normal loads and stores do, as we did in all previous kernels. Instead, they take a packed 64-bit matrix descriptor that tells the hardware where the matrix lives in shared memory and how it is laid out.

The format of the matrix descriptor is described in the docs as follows:

Originally, addresses are byte addresses. For example, an address at 16384 bytes corresponds to 0x4000 in hexadecimal. If the descriptor were to store raw byte addresses directly, it would very quickly run out of bits and severely limit the addressable range.

Instead, the hardware takes advantage of a key property: SMEM operands used by WGMMA are always at least 16B aligned. This means the lowest 4 bits of any valid address are always zero and carry no useful information.

So rather than storing byte addresses, the descriptor stores addresses in units of 16B. This same encoding is applied not only to the base address, but also to the leading dimension and stride offsets, as we can see in the table above. By doing this, the descriptor can represent much larger SMEM regions while still fitting all required metadata into a single 64-bit value that the hardware can efficiently broadcast and decode across the entire warp group.

A conceptual example of how this encoding happens looks like this:

![matrix-desc-encoding]](https://bear-images.sfo2.cdn.digitaloceanspaces.com/testing-inf1/excalidraw-52.svg)

The encoding logic and descriptor construction must run on the device, since WGMMA matrix descriptors are built inside the kernel. We construct the matrix descriptor in the make_smem_descriptor function.

We also will first need to convert the generic pointer bf16* into a shared-memory address using __cvta_generic_to_shared before encoding it. This step is subtle but essential. WGMMA is not a C++ abstraction, but a low-level hardware instruction that expects a SMEM byte address in a very specific format. A regular CUDA C++ pointer does not explicitly encode its address space (i.e Global, Shared etc.), so we cannot directly use or encode it. Instead, we must first convert the pointer into a concrete SMEM address that the hardware understands, and only then compress and pack it into the matrix descriptor